Profanity Filtering in 2026: Text, Audio, and Video Approaches

Demand for profanity filtering has surged. Google Trends shows +900% year-over-year growth for terms like "profanity filter list" and "bad word filter." The drivers are clear: AI-generated content needs moderation, platform policies are tightening, and creators want control over their content's language.

But "profanity filtering" means very different things depending on whether you're working with text, audio, or video. This guide breaks down how each approach works, when to use which, and how to handle the audio/video case that traditional filters can't touch.

Text-Based Profanity Filters

How They Work

Text filters are the oldest and most common approach. At their core, they compare input text against a list of flagged words and take action — replacing with asterisks, blocking the message, or flagging for review.

The basic pipeline:

- Normalize the input — lowercase, strip punctuation, handle Unicode tricks (like replacing "a" with "á")

- Tokenize — split into words

- Match against a word list — exact match, substring match, or regex patterns

- Take action — replace, block, flag, or score
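The four steps above can be sketched in a few lines of Python. This is a minimal illustration rather than a production filter: the `FLAGGED` set is a hypothetical placeholder holding two mild words, and the function names are invented for this example.

```python
import re
import unicodedata

# Hypothetical placeholder list; a real system would load a maintained list.
FLAGGED = {"darn", "heck"}

def normalize(text: str) -> str:
    """Lowercase and strip accents so 'Dárn' compares equal to 'darn'."""
    decomposed = unicodedata.normalize("NFKD", text.lower())
    return "".join(ch for ch in decomposed if not unicodedata.combining(ch))

def tokenize(text: str) -> list[str]:
    """Split normalized text into word tokens, dropping punctuation."""
    return re.findall(r"[a-z0-9']+", text)

def find_flagged(text: str) -> list[str]:
    """Normalize, tokenize, then exact-match each token against the list."""
    return [token for token in tokenize(normalize(text)) if token in FLAGGED]

def censor(text: str) -> str:
    """Take action: mask each flagged word with asterisks of equal length."""
    def mask(match: re.Match) -> str:
        word = match.group(0)
        return "*" * len(word) if normalize(word) in FLAGGED else word
    return re.sub(r"[^\W_]+", mask, text)
```

Calling `censor("Well, Dárn it.")` masks the accented variant while leaving the rest of the sentence intact. Swapping `censor` for a function that blocks or flags the message changes the action without touching the matching logic.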

Common Word List Sources

Several open-source profanity lists are widely used:

- Google's "What Do You Love" list — leaked internal list covering ~450 English terms

- Carnegie Mellon's profanity list — academic dataset used in research

- Luis von Ahn's bad words list — compiled from CAPTCHA data, includes variations and misspellings

- Community-maintained lists on GitHub — constantly updated, many with category tags (profanity, slurs, sexual content, etc.)

Most production systems combine multiple lists and add their own domain-specific terms.

The Limitations of Word Lists

Word lists are a starting point, not a solution. They struggle with:

Context blindness. "Damn" in "damn, this is good" is mild. "Damn" in a direct insult is different. Word lists can't tell the difference.

The Scunthorpe problem. Substring matching flags innocent words that happen to contain a listed term: "Scunthorpe" (an English town), "assassin," and "cocktail." Overly aggressive filters create false positives that frustrate users.
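A short comparison makes the difference between substring and whole-word matching concrete. The one-item `FLAGGED` list and both function names are assumptions made for this sketch.

```python
import re

FLAGGED = ["ass"]  # single mild term, used purely for illustration

def substring_hits(text: str) -> list[str]:
    """Naive approach: flag a term if it appears anywhere in the text."""
    lowered = text.lower()
    return [term for term in FLAGGED if term in lowered]

def whole_word_hits(text: str) -> list[str]:
    """Safer approach: flag a term only when it stands alone as a word."""
    lowered = text.lower()
    return [
        term for term in FLAGGED
        if re.search(rf"\b{re.escape(term)}\b", lowered)
    ]
```

With the input "The assassin took a class", the substring version reports a hit and the whole-word version reports none. The trade-off is that whole-word matching misses deliberate concatenations, which is one reason real filters layer several techniques.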

Evasion. Users quickly learn to bypass filters with creative spelling: "fck," "f*ck," "fμck," Unicode substitutions, zero-width characters, or Leetspeak ("sh1t"). Each evasion technique requires explicit handling.
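One way to handle several of these tricks is to build a cleaned comparison copy of the text before matching. The sketch below is an assumption-laden starting point: the `LEET` table and `ZERO_WIDTH` set are small hand-picked samples, not complete inventories.

```python
import unicodedata

# Common invisible characters used to split words (illustrative, not complete).
ZERO_WIDTH = {"\u200b", "\u200c", "\u200d", "\u2060", "\ufeff"}

# Small sample of Leetspeak substitutions (illustrative, not complete).
LEET = str.maketrans({
    "0": "o", "1": "i", "3": "e", "4": "a",
    "5": "s", "7": "t", "@": "a", "$": "s",
})

def deobfuscate(text: str) -> str:
    """Return a comparison copy with common evasion tricks undone."""
    # Drop invisible characters inserted between letters.
    text = "".join(ch for ch in text if ch not in ZERO_WIDTH)
    # Decompose accented letters and remove the combining marks.
    text = unicodedata.normalize("NFKD", text)
    text = "".join(ch for ch in text if not unicodedata.combining(ch))
    # Lowercase, then map Leetspeak digits and symbols back to letters.
    return text.lower().translate(LEET)
```

Match against the output of `deobfuscate`, but apply any masking to the original string, because the Leetspeak mapping also rewrites legitimate numbers. Look-alike letters from other alphabets (such as the Greek "μ" in the example above) are not covered here and would need a separate look-alike mapping table.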

New vocabulary. Slang evolves faster than lists can update. A word list from 2024 may miss terms that became offensive in 2025.

Multilingual gaps. A word list for one language is useless for another. Maintaining lists across 10+ languages is a major ongoing effort.

Modern Text Filter Approaches

To address word list limitations, modern text filters layer additional techniques:

- Phonetic matching (Soundex, Metaphone) — catches "phuck" and similar sound-alikes

- Levenshtein distance — flags words within N edits of a listed term

- ML classifiers — trained models that score text for toxicity beyond exact word matches (Google's Perspective API, OpenAI's moderation endpoint)

- LLM-based moderation — using language models to understand context and intent, not just vocabulary

The trend is clear: text filtering is moving from word lists toward AI-powered contextual understanding.
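Of the techniques listed above, Levenshtein distance is the easiest to show in full. The sketch below uses the standard dynamic-programming formulation; `fuzzy_match` and its `max_edits` parameter are names chosen for this example.

```python
def levenshtein(a: str, b: str) -> int:
    """Minimum number of single-character edits that turn a into b."""
    prev = list(range(len(b) + 1))  # distances from the empty prefix of a
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(
                prev[j] + 1,         # deletion
                curr[j - 1] + 1,     # insertion
                prev[j - 1] + cost,  # substitution (free when letters match)
            ))
        prev = curr
    return prev[-1]

def fuzzy_match(word: str, flagged: list[str], max_edits: int = 1) -> list[str]:
    """Return the listed terms within max_edits of the given word."""
    return [t for t in flagged if levenshtein(word.lower(), t) <= max_edits]
```

Fuzzy matching cuts both ways: with a placeholder list containing "darn", the misspelling "dann" is caught, but so is the harmless word "dark". Keeping `max_edits` small and skipping very short words are common ways to limit that.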

Audio Profanity Filtering

The Challenge

Audio profanity filtering is fundamentally harder than text filtering. You can't do a string comparison on a sound wave. The audio must first be converted to text (speech-to-text / transcription), then the text can be analyzed, and finally the audio must be modified to remove or replace the identified words.

This creates a three-stage pipeline:

- Transcription — Convert speech to text with word-level timestamps

- Detection — Identify which words to censor (word lists, patterns, or manual selection)

- Replacement — Replace the audio at those timestamps with a bleep sound, silence, or alternative audio
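The detection stage can be sketched as a function that turns a timestamped transcript into a list of time spans to censor. The `Word` dataclass, the `padding` parameter, and the merging of overlapping spans are assumptions made for this example, not a description of any particular tool.

```python
import string
from dataclasses import dataclass

@dataclass
class Word:
    text: str
    start: float  # seconds from the beginning of the audio
    end: float

def find_censor_spans(words, flagged, padding=0.05):
    """Return merged (start, end) spans covering every flagged word."""
    spans = []
    for w in words:
        token = w.text.strip().strip(string.punctuation).lower()
        if token not in flagged:
            continue
        # Pad each span slightly so clipped word edges are covered too.
        start = max(0.0, w.start - padding)
        end = w.end + padding
        if spans and start <= spans[-1][1]:
            # Overlaps the previous span: extend it instead of adding a new one.
            spans[-1] = (spans[-1][0], max(spans[-1][1], end))
        else:
            spans.append((start, end))
    return spans
```

The output is a plain list of time ranges, so the replacement stage does not need to know how the words were chosen.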

Why This Was Hard Until Recently

Before 2022, accurate word-level transcription required:

- Expensive commercial APIs (Google Speech, AWS Transcribe) at per-minute pricing

- Uploading audio to cloud servers (privacy concern)

- Post-processing to align word timestamps with the audio

The release of OpenAI's Whisper in 2022 changed the equation. Whisper provides:

- Word-level timestamps, available as a built-in option

- Support for 90+ languages

- Open-source models that can run locally (no upload required)

- Accuracy comparable to commercial APIs

This made it possible to build audio profanity filters that run entirely in the browser without sending files to a server.
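For readers who want to try local transcription outside the browser, the sketch below uses the open-source `openai-whisper` Python package, which is an assumption of this example. Browser-based tools rely on ports of the model rather than this package. The helper names are hypothetical; the package also needs ffmpeg installed to decode audio.

```python
def flatten_words(result: dict) -> list[tuple[str, float, float]]:
    """Collect (word, start, end) tuples from a Whisper transcription result."""
    words = []
    for segment in result.get("segments", []):
        # The "words" key is only present when word timestamps were requested.
        for w in segment.get("words", []):
            words.append((w["word"].strip(), float(w["start"]), float(w["end"])))
    return words

def transcribe_words(path: str, model_name: str = "base"):
    """Transcribe a local file and return word-level timestamps."""
    # Imported lazily so flatten_words can be used without the package.
    import whisper

    model = whisper.load_model(model_name)
    result = model.transcribe(path, word_timestamps=True)
    return flatten_words(result)
```

Everything runs on the local machine, so the audio file is never uploaded. Larger model names generally trade speed for accuracy.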

How Audio Filtering Works in Practice

Using Bleep That Sh*t! as an example:

- Upload an MP3 or MP4 file — the file stays on your device

- AI transcribes every word — Whisper generates a word-by-word transcript with precise start/end timestamps

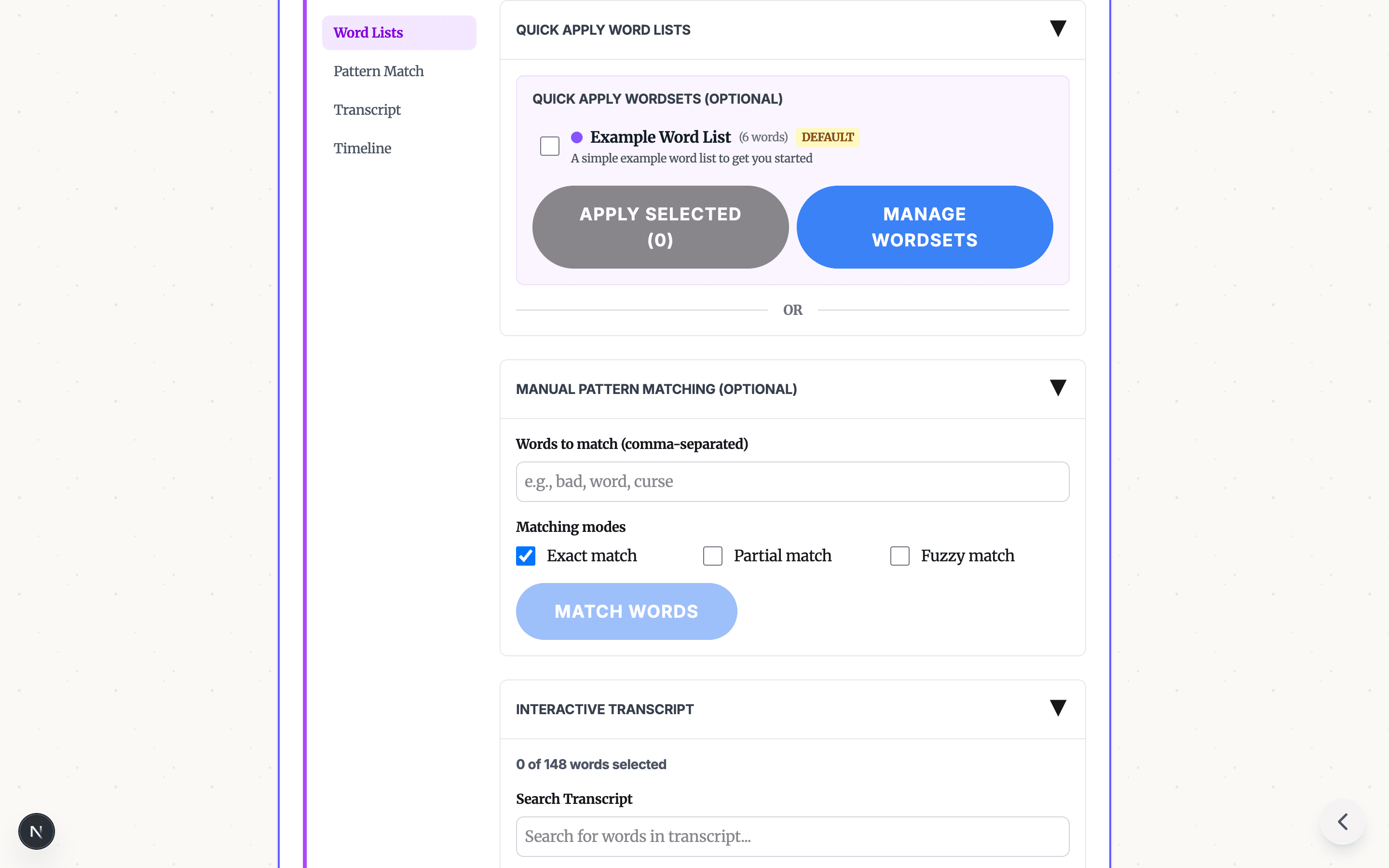

- Select words to censor using any combination of:

  - Pre-built profanity word lists (one-click filtering)

  - Pattern matching with exact, partial, or fuzzy modes

  - Manual click-to-select on the transcript

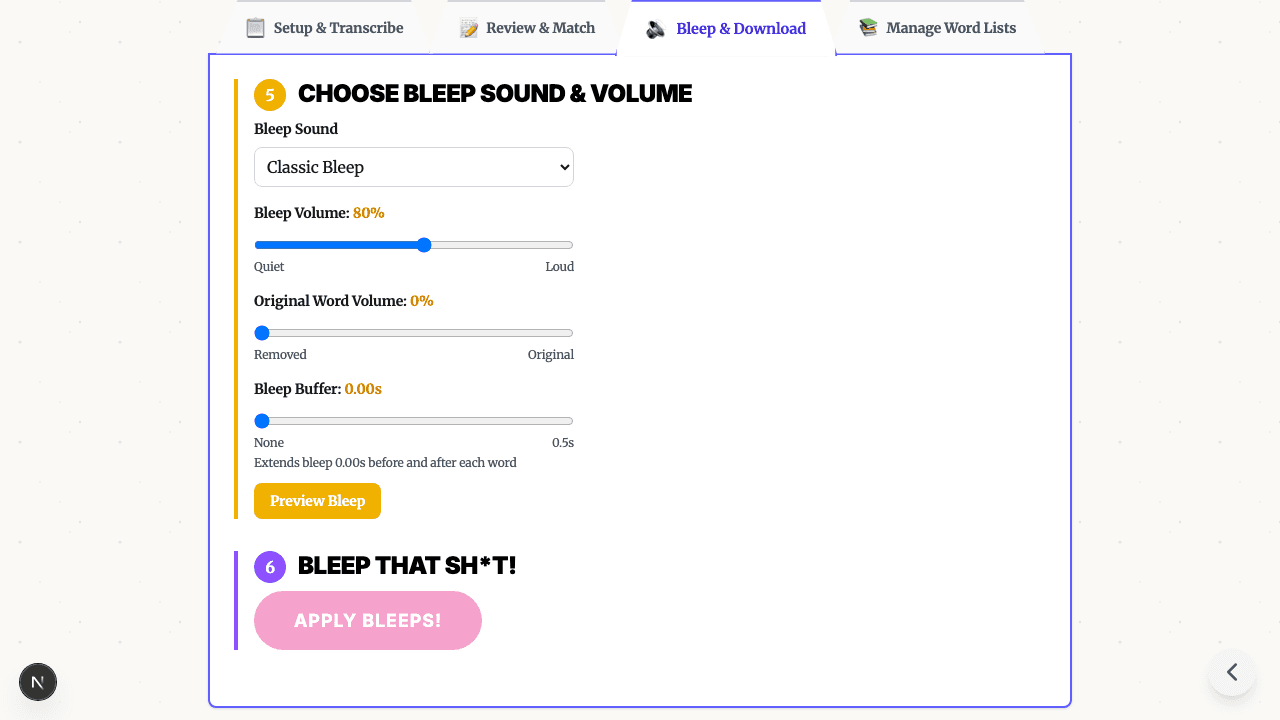

- Choose a replacement sound — classic bleep, silence, brown noise, or fun sounds. Read about the history of the bleep sound and why the 1000 Hz tone became the standard.

- Download the censored file — the tool replaces audio at the selected timestamps and exports the result

The key advantage over text-only filters: you get the original audio back with surgical modifications, not a transcript with asterisks.
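The replacement step itself is simple once the timestamps are known. The sketch below overwrites the selected spans of a mono sample buffer with a 1000 Hz sine tone. It is an illustration under stated assumptions (samples as floats between -1 and 1, a hypothetical `bleep` function), not the code any specific tool uses.

```python
import math

def bleep(samples, sample_rate, spans, freq=1000.0, volume=0.3):
    """Return a copy of samples with each (start, end) span replaced by a tone."""
    out = list(samples)  # leave the caller's buffer untouched
    for start, end in spans:
        # Convert seconds to sample indices, clamped to the buffer.
        first = max(0, int(start * sample_rate))
        last = min(len(out), int(end * sample_rate))
        for n in range(first, last):
            out[n] = volume * math.sin(2 * math.pi * freq * n / sample_rate)
    return out
```

Passing `volume=0.0` produces silence instead of a tone. Audio outside the spans is copied through unchanged, which is what keeps the edit surgical.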

Video Profanity Filtering

Audio Track Extraction and Remixing

Video profanity filtering is essentially audio filtering with an extra step. The pipeline:

- Extract the audio track from the video container (MP4, MOV, etc.)

- Run the audio filtering pipeline (transcribe → detect → replace)

- Remux — recombine the modified audio with the original video track

The video frames themselves are untouched. Only the audio is modified, which means:

- No quality loss on the video track

- No re-encoding of the video (faster processing)

- Perfect sync between video and modified audio
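The extract and remux steps map naturally onto ffmpeg, although the use of ffmpeg here is an assumption of this sketch and not something the pipeline above requires. The two hypothetical helpers only build the command lines, so they can be inspected before anything is run.

```python
def extract_audio_command(video_in: str, audio_out: str) -> list[str]:
    """Build an ffmpeg command that writes the audio track as 16-bit PCM."""
    return ["ffmpeg", "-y", "-i", video_in, "-vn", "-acodec", "pcm_s16le", audio_out]

def remux_command(video_in: str, clean_audio: str, video_out: str) -> list[str]:
    """Build an ffmpeg command that pairs the original video with new audio."""
    return [
        "ffmpeg", "-y",
        "-i", video_in,      # input 0: original video
        "-i", clean_audio,   # input 1: censored audio
        "-map", "0:v:0",     # take the video stream from input 0
        "-map", "1:a:0",     # take the audio stream from input 1
        "-c:v", "copy",      # copy video packets without re-encoding
        "-c:a", "aac",       # encode the new audio for the MP4 container
        "-shortest",
        video_out,
    ]
```

Each command can be run with `subprocess.run(cmd, check=True)`. The `-c:v copy` option is what avoids re-encoding the video, which is why the frames keep their original quality.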

When Video Filtering Matters

Video profanity filtering is critical for:

- YouTube monetization — profanity in the first 30 seconds can trigger limited or no ads. Bleeping before upload protects revenue.

- Classroom use — teachers need to show documentaries and educational content without inappropriate language. See our teacher guide.

- Corporate presentations — clips from interviews, conferences, or media that need sanitizing for professional settings.

- Content repurposing — taking a podcast episode or stream VOD and creating a clean version for wider distribution.

Comparison: Text vs. Audio vs. Video Filtering

| Dimension | Text Filtering | Audio Filtering | Video Filtering |
|---|---|---|---|
| Input | Written text | Audio files (MP3, WAV) | Video files (MP4, MOV) |
| Detection method | String matching, ML models | AI transcription + word matching | AI transcription + word matching |
| Replacement | Asterisks, [redacted], removal | Bleep sound, silence, noise | Bleep sound, silence, noise |
| Privacy | Varies (may send to API) | Can run locally in browser | Can run locally in browser |
| Real-time capable | Yes | Emerging (with streaming ASR) | No (requires full file) |
| Context understanding | Improving (with LLMs) | Limited (transcription accuracy) | Limited (same as audio) |
| Common tools | Perspective API, regex filters | Bleep That Sh*t!, Cleanfeed | Bleep That Sh*t!, Premiere Pro |
| Cost | Often free or per-API-call | Free (browser-based) to per-minute | Free (browser-based) to per-minute |

When to Use Which Approach

Use text filtering when:

- You're moderating user-generated text (chat, comments, forums)

- You need real-time filtering during input

- You want to score/flag content for human review

- You're processing high volumes of text (millions of messages)

Use audio filtering when:

- You have podcast episodes, voice recordings, or music with profanity

- You want to create clean versions of audio content

- Privacy matters — browser-based tools keep files local

- You need word-level precision (not just muting entire sections)

Use video filtering when:

- You're preparing video for YouTube, TikTok, or other platforms with language policies

- Classroom or corporate use requires clean video content

- You want to maintain video quality while only modifying the audio track

- You need a quick alternative to manual editing in Premiere or CapCut

Building Your Own Profanity Word List

Whether you're filtering text or using word lists for audio/video censoring, here are practical tips:

Start with an established base list. Don't build from scratch — use one of the open-source lists mentioned above and customize from there.

Categorize by severity. Not all profanity is equal. Tag words as mild, moderate, or severe so you can apply different filtering levels for different audiences.
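A severity-tagged list can be as simple as a dictionary plus a threshold. The tiers, the placeholder words, and the function name below are all assumptions chosen for illustration.

```python
# Numeric rank for each tier so tiers can be compared.
SEVERITY = {"mild": 1, "moderate": 2, "severe": 3}

# Placeholder entries; a real list would be far longer.
WORD_LEVELS = {"darn": "mild", "heck": "mild", "crap": "moderate"}

def words_to_filter(threshold: str) -> set[str]:
    """Return every word at or above the given severity tier."""
    limit = SEVERITY[threshold]
    return {w for w, level in WORD_LEVELS.items() if SEVERITY[level] >= limit}
```

A classroom audience might filter from "mild" upward, while a general podcast audience might only filter "severe" terms.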

Include common variations. For each base word, include plural forms, verb conjugations, and common misspellings. Partial matching and fuzzy matching in tools like Bleep That Sh*t! help catch variations automatically.

Test against real content. Run your list against sample content and check for false positives (legitimate words caught) and false negatives (profanity missed). Adjust accordingly.
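This kind of check is easy to automate with a small labeled sample. In the sketch below, `evaluate` and `naive_filter` are hypothetical names, and the filter is deliberately crude so that both kinds of error show up.

```python
def evaluate(filter_fn, labeled_samples):
    """Return (false_positives, false_negatives) for a filter function.

    labeled_samples is a list of (text, should_flag) pairs.
    """
    false_positives, false_negatives = [], []
    for text, should_flag in labeled_samples:
        flagged = filter_fn(text)
        if flagged and not should_flag:
            false_positives.append(text)
        elif not flagged and should_flag:
            false_negatives.append(text)
    return false_positives, false_negatives

def naive_filter(text: str) -> bool:
    """Deliberately crude substring filter, used to demonstrate both errors."""
    return "ass" in text.lower()

SAMPLES = [
    ("a classic film", False),  # legitimate word containing a listed term
    ("what an ass", True),
    ("what an a s s", True),    # spaced-out evasion
]
```

Running `evaluate(naive_filter, SAMPLES)` surfaces the legitimate phrase as a false positive and the spaced-out variant as a false negative, which are the two lists to review when adjusting the word list.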

Review and update quarterly. Language evolves. Set a calendar reminder to review your list and add new terms that have emerged.

Frequently Asked Questions

What is a profanity filter? A profanity filter is a tool that detects and removes or replaces inappropriate language. Text-based filters scan written content against word lists. Audio and video filters use AI transcription to identify spoken words and replace them with bleep sounds or silence.

Are profanity word lists reliable? Word lists catch known terms but struggle with misspellings, slang, new terms, and context. Modern filters combine word lists with AI models to improve accuracy, but no filter is 100% reliable. Human review remains important for edge cases.

Can I filter profanity from audio and video files? Yes. Tools like Bleep That Sh*t! use AI to transcribe speech, then let you select words to censor. The tool replaces selected words with bleep sounds and exports the cleaned file — all in your browser.

Do profanity filters work with languages other than English? Text-based filters need language-specific word lists. Audio filters built on Whisper can transcribe 90+ languages, though word detection accuracy varies by language and dialect.

Try Audio and Video Profanity Filtering

Text filters handle the written word. For audio and video, you need a different approach. Try Bleep That Sh*t! for free: upload your file, let AI find the words, select what to censor, and download. No account, no upload to servers, no software to install.

Need to process longer files? Bleep Studio offers cloud-powered transcription for audio and video files up to 2 hours. See plans.
